Trusted by Leading Organizations for Over 40 Years

Transforming Data Security with PKWARE

Traditional security methods like DLP defend the perimeter and block user actions but don’t protect the data itself. DLP also provides no visibility into encrypted data, whether at rest or after it leaves the organization. The result is manual intervention and user workarounds that create shadow IT.

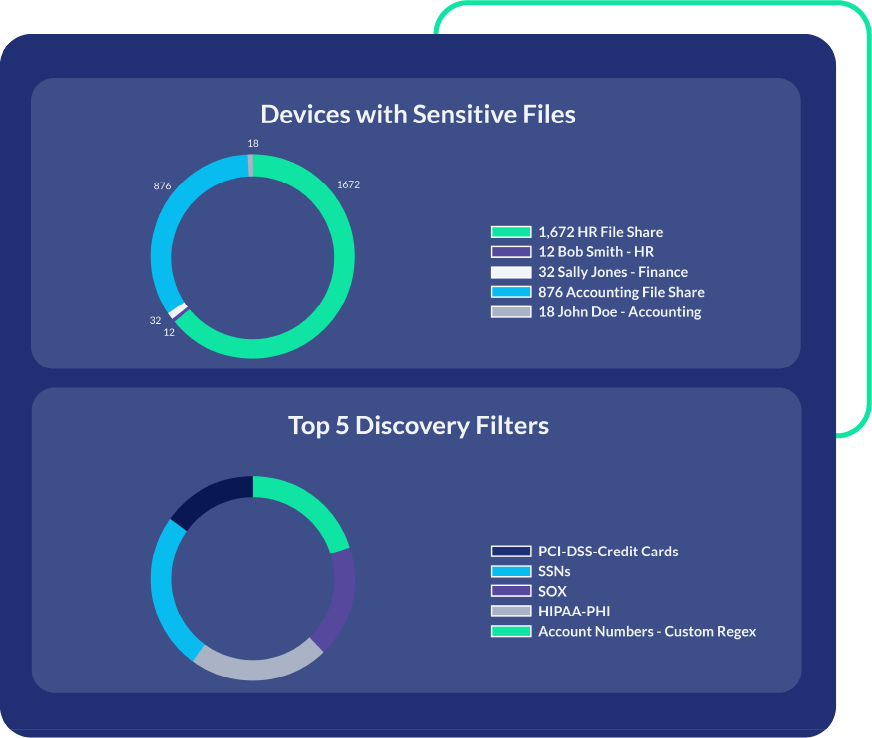

PK Protect takes a data-centric approach. It inventories data based on content and provides policy-driven options for securely sharing sensitive information. The result is security that doesn’t get in the way of productivity.

Security That Follows Your Data Everywhere

Data-Centric Features to Support Compliance and Minimize Risk